How It Works

How does a computer understand you when you talk to it using everyday language?

Our approach was to use billions of lines of dialogue to teach an AI how real human conversations flow.

Once the AI has learned from that data, it can predict how likely one statement is to follow another as a response. In these demos, the AI simply treats what you type as an opening statement and searches a pool of many possible responses for the ones most likely to follow.

The technique we're using to teach computers language is called machine learning. Google's Machine Learning Glossary defines machine learning as:

"...a program or system that builds (trains) a predictive model from input data."

What does that mean for us?

Input data: The input data is a billion pairs of statements, where the second statement is a response to the first one.

Predicting: We are predicting the response to a question or a statement. After seeing all those pairs of statements and responses, the AI learns to identify what a good response might look like.

Model: The trained system that is used for making predictions. After training, our model is able to pick the most likely response from a pool of options.
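To make the three terms above concrete, here is a minimal sketch in Python. It is a toy stand-in, not the model behind these demos: it "trains" by counting which response words tend to follow which statement words, then uses those counts to rank a pool of candidate responses. The function names (`train`, `score`, `rank`) and the three example pairs are made up for illustration.

```python
import math
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase the text and strip simple punctuation from each word."""
    cleaned = (w.strip(".,!?\"'").lower() for w in text.split())
    return [w for w in cleaned if w]


def train(pairs):
    """Input data -> model: count response words seen after each statement word."""
    counts = defaultdict(Counter)
    for statement, response in pairs:
        for s in set(tokenize(statement)):
            for r in set(tokenize(response)):
                counts[s][r] += 1
    return counts


def score(model, statement, response):
    """Higher when the response words were often seen after the statement words."""
    total = 0.0
    for s in set(tokenize(statement)):
        for r in set(tokenize(response)):
            total += math.log1p(model[s][r])
    return total


def rank(model, statement, pool):
    """Predicting: order the pool from most to least likely response."""
    return sorted(pool, key=lambda r: score(model, statement, r), reverse=True)


# Hypothetical training pairs: the second item responds to the first.
pairs = [
    ("How are you today?", "I am fine, thanks."),
    ("What is the weather like?", "It is sunny and warm."),
    ("How is the weather?", "Rainy and cold today."),
]
model = train(pairs)
pool = ["I am fine.", "It is sunny outside."]
print(rank(model, "What is the weather like today?", pool))
# ['It is sunny outside.', 'I am fine.']
```

A real system replaces the word counts with a learned model trained on far more data, but the shape is the same: pairs go in, a scoring model comes out, and prediction means picking the best-scoring option from a pool.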

Verse by Verse

Status: Experiment closed in October 2025.

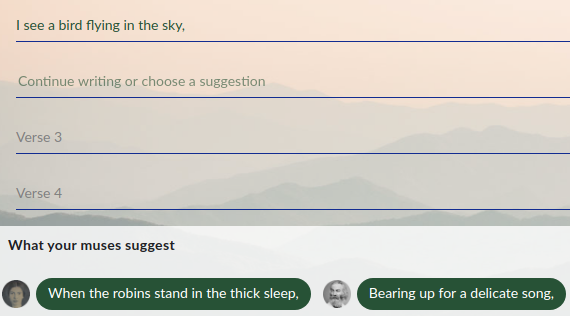

In Verse by Verse, you were given the opportunity to compose poetry in collaboration with classic American poets like Walt Whitman, Emily Dickinson, Edgar Allan Poe, and many more. These poets would act as your muses, making suggestions of verses as you wrote a poem.

In this application, there were two models at work. One model, a generative model, was trained on classic poetry in order to learn how to create novel verses in the style of our cadre of poets. The other model, which used the same semantic understanding technology as our other applications, was trained to semantically understand which verses best follow the previously written line of verse.
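The two-model arrangement can be sketched as a generate-then-rank pipeline. Everything in the Python sketch below is a simplified assumption: a tiny bigram chain stands in for the generative model, a count of shared words stands in for the semantic model, and the three placeholder lines are invented rather than taken from any poet.

```python
import random
from collections import defaultdict


def train_bigrams(lines):
    """Stand-in generative model: record which word follows which."""
    starts, follows = [], defaultdict(list)
    for line in lines:
        tokens = line.lower().split()
        if not tokens:
            continue
        starts.append(tokens[0])
        for a, b in zip(tokens, tokens[1:]):
            follows[a].append(b)
    return starts, follows


def generate_verse(model, rng, max_words=8):
    """Walk the bigram chain to produce one candidate verse."""
    starts, follows = model
    word = rng.choice(starts)
    verse = [word]
    while len(verse) < max_words and follows.get(word):
        word = rng.choice(follows[word])
        verse.append(word)
    return " ".join(verse)


def shared_words(a, b):
    """Stand-in ranking model: count the words two lines have in common."""
    return len(set(a.lower().split()) & set(b.lower().split()))


def suggest(model, previous_line, n_candidates=20, top_k=3, seed=0):
    """Generate candidates, then keep those that best follow the previous line."""
    rng = random.Random(seed)
    candidates = {generate_verse(model, rng) for _ in range(n_candidates)}
    ranked = sorted(candidates, key=lambda v: (-shared_words(previous_line, v), v))
    return ranked[:top_k]


# Placeholder training lines, invented for this example.
corpus = [
    "the sea is wide and deep",
    "the night is long and still",
    "a bird sings in the night",
]
poet = train_bigrams(corpus)
for verse in suggest(poet, "I walked alone into the night"):
    print(verse)
```

Separating the two stages means each part can be swapped independently: a different poet is a different generator, while the same ranking step decides which of that poet's candidates fit the line you just wrote.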

Users were able to write poetry with different poets, with each poet having their own individual style. These poets were your partners, suggesting verses as you wrote your poem. Users were free to write their own verses, use the suggestions they received, or use the suggestions as inspiration for writing their own. This allowed for a more collaborative and creative approach to poetry.

If you're looking for an AI collaborator for composing poetry, we suggest checking out gemini.google.com. You'll find that Gemini knows a lot about poetry!

Talk to Books

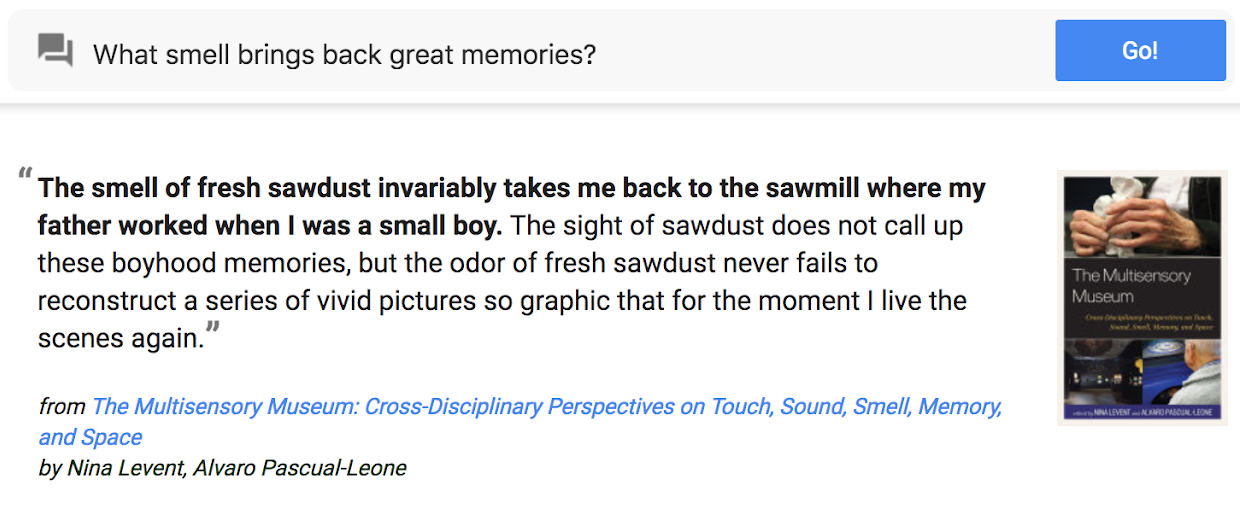

Status: Experiment closed in June 2023.

In Talk to Books, when you typed in a question or a statement, the model looked at every sentence in over 100,000 books to find the responses that would most likely come next in a conversation. The response sentences were shown in bold, along with some of the text that appeared next to the sentence for context.
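The search-and-show-context flow described above might look roughly like the Python sketch below. Note the big simplification: the real model judged whether a sentence was a likely conversational response, whereas this sketch uses plain word overlap as a placeholder score. The function names and the two tiny "books" are hypothetical.

```python
import re


def split_sentences(text):
    """Split text into sentences at ., ! or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def words(text):
    return set(re.findall(r"[a-z']+", text.lower()))


def overlap_score(query, sentence):
    """Placeholder score: fraction of words shared (not the real model)."""
    q, s = words(query), words(sentence)
    return len(q & s) / len(q | s) if (q | s) else 0.0


def best_passages(query, books, top_k=1, context=1):
    """Score every sentence in every book and return the best with context."""
    candidates = []
    for title, text in books.items():
        sentences = split_sentences(text)
        for i, sentence in enumerate(sentences):
            candidates.append((overlap_score(query, sentence), title, i, sentences))
    candidates.sort(key=lambda c: c[0], reverse=True)

    results = []
    for _, title, i, sentences in candidates[:top_k]:
        results.append({
            "title": title,
            "before": " ".join(sentences[max(0, i - context):i]),
            "match": sentences[i],  # the sentence that would be shown in bold
            "after": " ".join(sentences[i + 1:i + 1 + context]),
        })
    return results


# Two invented "books" for illustration.
books = {
    "Book A": "The sea was calm. Sailors fear storms at sea. They pray for wind.",
    "Book B": "Bread needs yeast. Let the dough rise.",
}
hit = best_passages("Why do sailors fear storms?", books)[0]
print(hit["before"], "**" + hit["match"] + "**", hit["after"])
```

Scoring individual sentences rather than whole books is what lets the result point at one specific line, with its neighbors included only as context.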

Although it had a search box, its objectives and underlying technology were fundamentally different from those of a more traditional search experience. It was a demonstration of research that enabled an AI to find statements that looked like probable responses to your input rather than a finely polished tool that would take into account the wide range of standard quality signals. You sometimes needed to play around with it to get the most out of it.

You can still explore our sample queries to get a feel for how Talk to Books used to work. Unfortunately, because the experiment is now closed, it is no longer possible to enter new search queries.

If you’re looking for a new kind of AI experience using natural language, we suggest checking out bard.google.com. You’ll find that Bard knows a lot about books!

Semantris

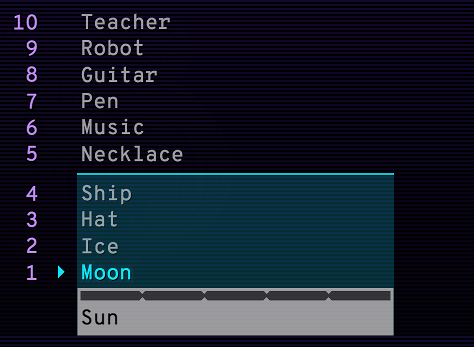

Semantris is a word association game that uses this same technology. Each time you enter a clue, the AI looks at all the words in play and chooses the ones it thinks are most related. Because the AI was trained on conversational text spanning a large variety of topics, it is able to make many types of associations.

In Semantris' Arcade, when the AI sorts the list, the most related words are moved to the bottom. In the example above, you can see that it thinks that the word "Moon" is a better conversational response to "Sun" than "Teacher".
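The sorting step can be illustrated with a short Python sketch. The vectors below are made-up numbers chosen so that "sun" and "moon" point in similar directions; the actual game gets its relatedness scores from the trained model, not from a hand-written table. `TOY_VECTORS` and `sort_for_arcade` are hypothetical names.

```python
import math

# Made-up vectors for illustration only; a trained model would supply these.
TOY_VECTORS = {
    "sun": (0.9, 0.1, 0.0),
    "moon": (0.8, 0.2, 0.1),
    "teacher": (0.0, 0.1, 0.9),
    "school": (0.1, 0.0, 0.8),
}


def cosine(a, b):
    """Cosine similarity: near 1.0 for similar directions, near 0.0 for unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def sort_for_arcade(clue, words_in_play):
    """Sort ascending by relatedness so the most related word ends up last (bottom)."""
    clue_vec = TOY_VECTORS[clue.lower()]
    return sorted(words_in_play, key=lambda w: cosine(clue_vec, TOY_VECTORS[w.lower()]))


print(sort_for_arcade("Sun", ["Moon", "Teacher", "School"]))
# ['Teacher', 'School', 'Moon']
```

With these toy numbers, "Moon" lands at the bottom of the list for the clue "Sun", mirroring the example above.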

Semantris is similar to other word association games where a person gives clues to help their teammate guess the correct words. However, in Semantris, you give your hints to an AI. Because the AI can sometimes have quirky responses, you'll need to experiment with different types of clues to learn how this AI thinks and to earn the highest scores. Try playing with slang, technical terms, pop culture references, synonyms, antonyms, and even full sentences.

For Developers

Visit For Developers to dive deeper into the technology and use it in your own applications.

We Invite Your Feedback

You may find delightful responses you want to share with us, or have suggestions for improving these demos. You may also see surprising or confusing responses -- or even responses that make you uncomfortable. These are raw research demos. They demonstrate the AI's full capabilities and weaknesses, including how it can reflect human cognitive biases. If you'd like to learn more about bias in language understanding models, visit our For Developers page.

These are imperfect experiments and we are learning from them. We invite your feedback as it helps us improve. Click the exclamation point icon at the top right of each experience to send us your feedback.